Recently I had a huge task – improve ETL process between MS SQL servers – to transfer near billion of rows every day in a real time. This means, once a master SQL server receives new rows, the rows must be immediately transferred to 8 satellite MS SQL servers.

The back-end. Every MS SQL server running on one Intel Xeon E5-2690 2.9GHz 8 cores CPU, has 384GB of memory and enterprise SSD storage: separate RAID10 for data, index and log files. TempDB running on RAM drive (in memory). The main database table are separated in file-groups per day.

Before the improvement, I found around up to 800.000 rows per minute through-output. Since the amount of data were increasing every single day, the performance were too slow. By the way, the method to move the rows to satellite servers were to use stored procedure and to transfer the rows in chunks up to 1000000 of rows .

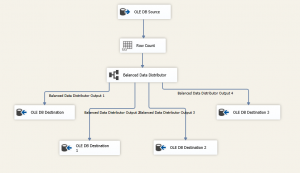

To improve the through-output, the decision were made to use SSIS package instead of USP, since SSIS is probably the best place for ETL processes. And of course to multi-thread the process. So after a lot of tests the best performance we reached using the data flow bellow:

The most important part is Balance Data Distributor. Using the component, the SSIS package were able to write in 4 separate threads into one table. This helped a lot and every additional thread gave additional 500000 of rows per minute through-output (using single thread without the component, SSIS were able to reach up to 1000000 rows per minute). So the total through-output are up to 3 million of rows or 1.5GB of data per minute in the real time from the master MS SQL server to 8 satellite MS SQL servers.

Hope this will help someone to save a lot of time and provides ability to have really fast solution to move huge amount of data between servers, etc. prepared in a short time.

If you need any help on details, leave a comment or write me an email!

Nice! Thanks Sauliau.

Nice! How does DefaultBufferMaxSize setting impact? For example, we change 10MB to 1GB then timing process will be decrease?

Good point! At the moment it’s left as a default value, but this will be reviewed and tested latter.

What did you use to get the tempdb into ram?

Currently we are using Primo Ramdisk.

Today. I learned a new knowledge from you.

Thanks.

Thanks Saulius. I aspire to become a Senior database administrator, and even if i still a very junior for the moment. I’ve thought to read this article anyway hoping it will bring me something and it was eventually the case, although i don’t deeply understand it. So thanks for that. I really need advices to well handle my career. So i’ll be very glad if i sometimes get some of you. Good job, thanks again.

Información excelent

Good article. Is the Balanced data distributor component available for free or is it made available via some third party?

The component is free and you may download it directly from microsoft site: https://www.microsoft.com/en-us/download/details.aspx?id=30147

AFAIK the component included in the standard components list starting from SQL2016

Question please, this shows 4 destination connections, and each branch will yield thru 500k rows per minute (rpm), or are you saying the 0 branch runs at 1000K rpm, and 1, 2, 3 run at a steady 500k rpm to their destinations ?

You say output is distributed to 8 target servers from a single source server, via a local connect abd ssis is running on that source server ?

Balance data distributor (BDT) split incoming amount of rows in equal chunks to all destinations. This means, when running with 4 destinations the data goes at the same speed to all branches. Of course, if you will compare one destination which may run at 1000K rpm without BDT to one of 4 destinations branch 0 for example, which will run at around 500-800K rpm, this will look like BDT slowing down the speed. But since with BDT we are running with 4 destinations, so we run at 4 times 500-800K rpm and this are ways faster compare to single destination. In other words, we are able to multi thread insert to the same table.

SSIS I’m running stored on completely separate server, this means non on local (source) or abd destination servers.